The Key to Building Smarter, Human-Centric AI Systems

Artificial intelligence is evolving fast. But building AI that is truly useful, safe, and human-like is still a challenge.

Many AI models can generate answers. Not all of them understand what users actually need. This gap between accuracy and usefulness is where Reinforcement Learning from Human Feedback (RLHF) becomes essential.

For companies developing AI solutions, RLHF is no longer optional. It is the foundation for creating systems that align with human expectations and deliver real-world value.

What is Reinforcement Learning from Human Feedback (RLHF)?

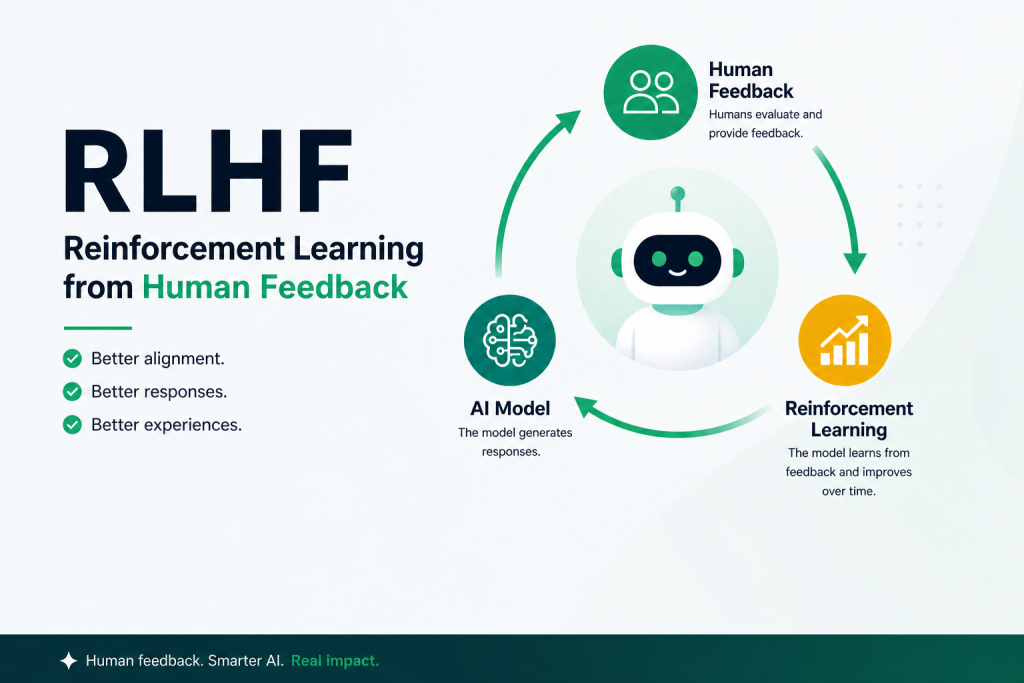

Reinforcement Learning from Human Feedback (RLHF) is a training approach that improves AI models by incorporating human input into the learning process.

Instead of relying only on static datasets, RLHF allows models to learn from human preferences, rankings, and feedback. This helps AI systems understand not just what is correct but what is helpful, safe, and contextually appropriate.

In simple terms, RLHF teaches AI to behave in ways people expect.

Why Traditional AI Training Falls Short

Many machine learning models are trained using supervised learning. They learn from labeled datasets where each input has a predefined correct output.

While effective for structured tasks, this approach has limitations in real-world scenarios.

For example:

- A chatbot may give a technically correct answer that feels confusing

- A content generator may produce text that lacks clarity or tone

- A model may fail to recognize unsafe or sensitive responses

These challenges arise because real-world interactions are subjective. There is no single perfect answer. There are better and worse responses.

How RLHF Works

RLHF enhances AI training by introducing a human-in-the-loop approach. The process typically involves three stages.

First, the model is pretrained on large datasets to understand language patterns, context, and structure. This forms the base intelligence of the AI system.

Next, human reviewers evaluate multiple outputs generated by the model. They rank responses based on clarity, usefulness, tone, and safety. This creates a preference dataset that reflects real human expectations.
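As a rough illustration of what a preference dataset can look like, the sketch below expands one reviewer ranking into "chosen vs. rejected" pairs. The function name `ranking_to_pairs`, the record fields, and the sample prompt are assumptions made for this example, not a fixed industry format.

```python
from itertools import combinations

def ranking_to_pairs(prompt, ranked_responses):
    """Expand one ranking (best response first) into chosen/rejected pairs.

    Hypothetical helper: the field names below are illustrative only.
    """
    pairs = []
    # combinations() keeps list order, so `better` always outranks `worse`.
    for better, worse in combinations(ranked_responses, 2):
        pairs.append({"prompt": prompt, "chosen": better, "rejected": worse})
    return pairs

pairs = ranking_to_pairs(
    "How do I reset my password?",
    ["Step-by-step answer", "Short but vague answer", "Off-topic answer"],
)
print(len(pairs))  # 3
```

A ranking of three responses yields three pairs, so even a small review effort can produce a meaningful number of comparisons.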

Finally, reinforcement learning is applied. In a typical setup, a reward model is first trained on these rankings so it can score new responses, and the AI model is then optimized to produce responses that score highly. Over time, it begins to generate responses that align more closely with human preferences.
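In many RLHF setups, the rankings are first used to fit a reward model, and the reinforcement learning step then optimizes the AI model against that reward. The sketch below covers only the reward-model part, using a pairwise (Bradley-Terry style) loss on a toy linear model. The two hand-made features (a clarity score and an "unsafe" flag), the learning rate, and the epoch count are all assumptions for illustration; real systems score text with a neural network, and the policy optimization step is not shown.

```python
import math

def pairwise_loss(r_chosen, r_rejected):
    """Loss is small when the chosen response scores above the rejected one."""
    # Equivalent to -log(sigmoid(r_chosen - r_rejected)).
    return math.log1p(math.exp(-(r_chosen - r_rejected)))

def reward(weights, features):
    """Toy linear reward: weighted sum of hand-made features."""
    return sum(w * x for w, x in zip(weights, features))

def train_reward_model(pairs, n_features, lr=0.5, epochs=200):
    """Fit weights so chosen responses outscore rejected ones."""
    weights = [0.0] * n_features
    for _ in range(epochs):
        for chosen, rejected in pairs:
            margin = reward(weights, chosen) - reward(weights, rejected)
            # Derivative of the pairwise loss with respect to the margin.
            grad_scale = -1.0 / (1.0 + math.exp(margin))
            for i in range(n_features):
                weights[i] -= lr * grad_scale * (chosen[i] - rejected[i])
    return weights

# Hypothetical features per response: [clarity score, unsafe flag].
toy_pairs = [
    ([1.0, 0.0], [0.2, 0.0]),  # clearer answer was preferred
    ([0.6, 0.0], [0.9, 1.0]),  # safe answer preferred over a clear but unsafe one
]
weights = train_reward_model(toy_pairs, n_features=2)
for chosen, rejected in toy_pairs:
    print(reward(weights, chosen) > reward(weights, rejected))
```

After training, the toy model rewards clarity and penalizes the unsafe flag, which mirrors how reviewer preferences become a signal the AI model can be optimized against.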

Key Benefits of RLHF in AI Development

1. Improved Response Quality

RLHF helps ensure that AI outputs are not just accurate but also clear, relevant, and easy to understand.

2. Human-Centric AI Systems

By learning from human feedback, AI becomes more aligned with real user needs and expectations.

3. Enhanced Safety and Compliance

RLHF helps reduce harmful, biased, or inappropriate outputs, making AI systems safer for deployment.

4. Better User Experience

AI interactions feel more natural, conversational, and engaging, leading to higher user satisfaction.

Real-World Applications of RLHF

RLHF is used, or is becoming increasingly relevant, across industries where AI interacts directly with users.

- Conversational AI and chatbots

- Generative AI tools for content creation

- Healthcare AI systems requiring sensitive responses

- Financial AI applications demanding accuracy and compliance

- E-commerce recommendation systems

In all these use cases, aligning AI outputs with human expectations is critical.

Why RLHF Matters for AI Companies

For businesses building AI-driven products, RLHF offers a clear advantage.

It enables organizations to:

- Deliver more reliable and trustworthy AI solutions

- Improve customer engagement through better interactions

- Reduce risks related to unsafe or biased outputs

- Build AI systems that reflect brand tone and communication style

In a market where user experience defines success, RLHF directly impacts product quality and adoption.

The Role of High-Quality Data in RLHF

The effectiveness of RLHF depends heavily on the quality of human feedback and annotated data. Poor-quality data leads to poor model behavior.

This is where expert data annotation becomes critical.

Creating high-quality RLHF datasets requires:

- Skilled human annotators

- Clear evaluation guidelines

- Multi-level quality assurance

- Domain-specific expertise

Without these elements, even advanced AI models struggle to perform effectively.
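One common quality check on human feedback is to measure how often two annotators agree beyond what chance would predict. The sketch below computes Cohen's kappa for two annotators who each picked the better of two responses ("A" or "B") on the same items. The sample labels are made up for illustration, and how a given team interprets the resulting score is left as an assumption.

```python
from collections import Counter

def cohens_kappa(labels_a, labels_b):
    """Agreement between two annotators, corrected for chance agreement."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("Both annotators must label the same non-empty item set")
    n = len(labels_a)
    # Fraction of items where the two annotators chose the same label.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    counts_a, counts_b = Counter(labels_a), Counter(labels_b)
    # Agreement expected if each annotator labeled at random with their own label rates.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both annotators used one identical label throughout
    return (observed - expected) / (1 - expected)

# Made-up labels: which response each annotator preferred on six items.
annotator_1 = ["A", "A", "B", "B", "A", "B"]
annotator_2 = ["A", "A", "B", "A", "A", "B"]
kappa = cohens_kappa(annotator_1, annotator_2)
print(round(kappa, 3))  # 0.667
```

A low score on a batch can flag unclear guidelines or items that need a second review before they enter the preference dataset.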

How Infolks Supports RLHF and AI Training

At Infolks, we specialize in delivering high-quality training datasets that power advanced AI models, including those using RLHF.

Our expertise includes:

- Human-in-the-loop data annotation for AI training

- Text, image, audio, and video labeling

- Preference ranking and evaluation datasets for RLHF

- Domain-specific annotation for healthcare, finance, retail, and more

- Multi-level quality assurance for maximum accuracy

With ISO-certified processes and a strong focus on data security, we ensure that your AI models are trained on reliable, high-quality data.

The Future of RLHF

As AI continues to evolve, RLHF will play an even more important role in shaping intelligent systems.

Future advancements will focus on:

- Personalized AI experiences based on user behavior

- Continuous learning from real-time feedback

- Improved alignment with ethical and regulatory standards

Organizations that invest in RLHF today will be better positioned to build AI systems that are not only powerful but also trusted.

Final Thoughts

Reinforcement Learning from Human Feedback is transforming how AI systems are trained and deployed. It connects machine intelligence with real human expectations.

For businesses building AI solutions, the focus should go beyond automation. It should include alignment, quality, and user experience.

RLHF makes that possible.